Large Language Models (LLMs) are transforming how engineers work. They can interpret complex reports, draft documentation, and write code in seconds. But what happens when we ask them to perform critical engineering calculations? Can you trace an AI’s math back to a published standard? Can you verify which equations it actually used? Can you confidently defend its output in a design review?

Today, the honest answer is: not reliably. LLMs have become remarkably capable. They can search the web, run calculations, and even cite references. But for critical engineering work, the bar isn’t “can it find a formula?”; it’s “can you guarantee which formula it used, and will it use the same one next time?” That deterministic traceability is exactly what the statistical nature of LLMs cannot provide.

Enter GeoMCP. This new approach is built specifically to bridge that gap for analytical calculations in geotechnical engineering, but the methodology is applicable to any engineering discipline that relies on published standards and verified formulas. You can find the link to the GeoMCP paper at the bottom of this article.

The Problem: The trust gap

So what does “trustworthy” actually mean for an engineering calculation? In practice, it means three things: you can trace every formula back to a specific published source, you can reproduce the exact same result tomorrow, and you can defend your choice of method under professional scrutiny. This is the bar, and it’s non-negotiable for safety-critical design.

LLMs don’t clear it, not because they lack capability, but because of how they work. When an LLM performs a calculation, it reconstructs formulas from patterns learned during training rather than reading them from a verified, citable source. Even when it does find and cite a source via web search, there is no guarantee it will pick the same source, or the same formula variant, the next time you ask. In short, the calculation may be competent, but it isn’t auditable in the way engineering practice requires.

This matters whether you’re sizing a foundation, checking a steel connection, or computing hydraulic capacity. The specific discipline changes; the need for traceable, reproducible, auditable calculations does not. So, how do we bridge the gap between AI’s reasoning capabilities and engineering’s need for strict determinism?

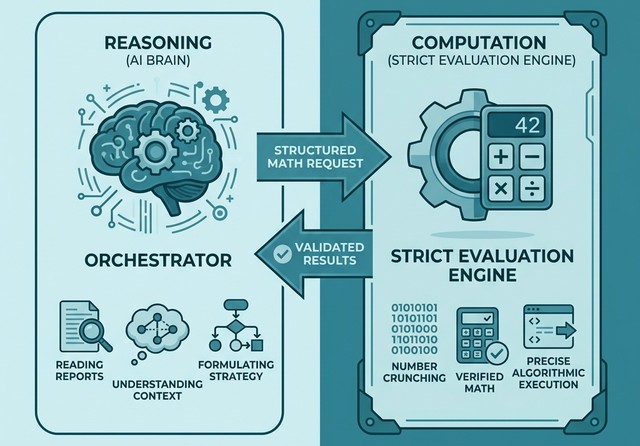

The Solution: Let AI orchestrate, not calculate

The insight behind GeoMCP is simple: don’t let the LLM do the math. Let it do what it’s genuinely good at (understanding the engineering context) and hand the computation to verified, deterministic tools. The LLM orchestrates; the tools calculate. This separation is what makes the result trustworthy.

Four components make this work:

- Method Cards. Every analytical method is captured as a structured, human-readable file declaring exact equations, variables, units, and literature sources. Any engineer can verify the formulas and trace them to a published source. What you see is what gets evaluated.

- A Deterministic Evaluation Engine. The formulas aren’t hard-coded in software; they live in the method cards. The engine is a general-purpose executor that reads the cards, checks dimensional consistency, and prevents any unverified code from running. Same inputs, same result, every time.

- The Model Context Protocol (MCP). MCP is an open standard that lets applications expose their capabilities as discoverable tools. GeoMCP implements MCP, which means any MCP-compatible AI assistant, such as Claude, ChatGPT, or Gemini, can find and use its verified calculators without custom integration.

- Agent Skills. Structured workflows that teach an AI to think like an engineer: assessing inputs and selecting the right method before any math happens, then validating the results afterward.
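
To make the first two components concrete, here is a minimal Python sketch of a method card and a deterministic evaluator. It is not GeoMCP's actual card format or engine: the field names, the `evaluate` function, and the simple unit-label check (a stand-in for real dimensional analysis) are all assumptions made for illustration. The two equations are the drained bearing capacity factors from EN 1997-1, Annex D.

```python
import ast
import math
import operator

# Hypothetical method card. The real GeoMCP card format is not shown in this
# article, so every field name here is an illustrative assumption.
CARD = {
    "id": "ec7_bearing_capacity_factors",
    "source": "EN 1997-1:2004, Annex D",
    "inputs": {"phi": "rad"},  # variable name -> required unit label
    "equations": [
        ("Nq", "exp(pi * tan(phi)) * tan(pi / 4 + phi / 2) ** 2"),
        ("Nc", "(Nq - 1) / tan(phi)"),
    ],
}

# Whitelists: anything outside these is rejected, so a card cannot run code.
_BINOPS = {
    ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul,
    ast.Div: operator.truediv, ast.Pow: operator.pow,
}
_FUNCS = {"exp": math.exp, "tan": math.tan}
_CONSTS = {"pi": math.pi}


def _eval(node, env):
    """Evaluate a parsed expression using only whitelisted syntax."""
    if isinstance(node, ast.Expression):
        return _eval(node.body, env)
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return node.value
    if isinstance(node, ast.Name) and node.id in env:
        return env[node.id]
    if isinstance(node, ast.BinOp) and type(node.op) in _BINOPS:
        return _BINOPS[type(node.op)](_eval(node.left, env), _eval(node.right, env))
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_eval(node.operand, env)
    if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
            and node.func.id in _FUNCS and not node.keywords):
        return _FUNCS[node.func.id](*[_eval(arg, env) for arg in node.args])
    raise ValueError(f"disallowed expression: {ast.dump(node)}")


def evaluate(card, inputs):
    """Run a card's equations in order; inputs map name -> (value, unit)."""
    env = dict(_CONSTS)
    for name, unit in card["inputs"].items():
        value, given_unit = inputs[name]
        if given_unit != unit:  # simplified stand-in for a dimensional check
            raise ValueError(f"{name}: expected unit {unit!r}, got {given_unit!r}")
        env[name] = value
    results = {}
    for name, expression in card["equations"]:
        env[name] = _eval(ast.parse(expression, mode="eval"), env)
        results[name] = env[name]
    # The source travels with the result, so the output stays traceable.
    return {"method": card["id"], "source": card["source"], "results": results}


if __name__ == "__main__":
    print(evaluate(CARD, {"phi": (math.radians(30.0), "rad")}))
```

The design point is that the equations live in data, not in the engine. Reviewing the card is reviewing the calculation, and the whitelist means a card containing anything other than plain arithmetic is refused rather than executed.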

Proof It Works: Validating against Eurocode 7

Does it actually work? We validated GeoMCP against the official JRC Eurocode 7 worked examples published by the European Commission, testing a strip foundation design across all three Design Approaches. The results matched the authoritative reference values to high precision across all bearing capacity factors, design actions, and design resistances. Full validation tables and the complete AI-assisted analysis walkthrough are available in the paper.

Why bother when you already have verified tools?

Practicing engineers already have spreadsheet templates, commercial software, and similar tools for their routine analytical calculations. These tools are verified and time-tested, and they work. So what is the point of applying AI to analytical methods and building systems like GeoMCP?

The answer becomes clear when you zoom out. Analytical calculations don’t exist in isolation. A real geotechnical engineering project flows from ground investigation interpretation through analytical design checks to numerical simulations and reporting. Today, each of these steps lives in its own silo: a different tool, a different format, a different manual handoff. AI has the potential to automate and connect these workflows, but only if the tools along the chain can communicate with each other in a standardized, machine-readable way.

That’s what AI-readiness means. Your spreadsheet may be perfectly correct, but it can’t easily be discovered, invoked, or orchestrated by an AI assistant. GeoMCP doesn’t replace existing tools; it makes analytical calculations a first-class participant in an automatable engineering pipeline. And the same principle applies to every other step in the chain: ground models, numerical solvers, design code checks. The productivity gains of AI in engineering won’t come from any single tool getting smarter but rather from making our tools interoperable and AI-ready.
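
As a rough illustration of what "discoverable" means here, the Python sketch below mimics the two MCP requests an assistant relies on: `tools/list` to find out which calculators exist and what inputs they take, and `tools/call` to invoke one. It is a simplified stand-in, not GeoMCP's server: the tool name, the `handle` function, and the handler are hypothetical, and the JSON-RPC envelope and transport that a real MCP server needs are left out.

```python
import json
import math

# Hypothetical tool descriptor. MCP tools carry a name, a description, and a
# JSON Schema for their inputs; this particular tool is illustrative only.
TOOLS = [{
    "name": "bearing_capacity_factor_nq",
    "description": "Drained bearing capacity factor Nq (EN 1997-1, Annex D).",
    "inputSchema": {
        "type": "object",
        "properties": {
            "phi_deg": {"type": "number", "description": "Friction angle in degrees"},
        },
        "required": ["phi_deg"],
    },
}]


def _nq(phi_deg):
    """Deterministic calculation behind the tool; the LLM never does this math."""
    phi = math.radians(phi_deg)
    return {"Nq": math.exp(math.pi * math.tan(phi)) * math.tan(math.pi / 4 + phi / 2) ** 2}


HANDLERS = {"bearing_capacity_factor_nq": _nq}


def handle(request):
    """Dispatch simplified 'tools/list' and 'tools/call' requests."""
    if request["method"] == "tools/list":
        return {"tools": TOOLS}
    if request["method"] == "tools/call":
        params = request["params"]
        result = HANDLERS[params["name"]](**params["arguments"])
        return {"content": [{"type": "text", "text": json.dumps(result)}]}
    raise ValueError(f"unsupported method: {request['method']}")


if __name__ == "__main__":
    print(handle({"method": "tools/list"}))
    print(handle({
        "method": "tools/call",
        "params": {"name": "bearing_capacity_factor_nq", "arguments": {"phi_deg": 30.0}},
    }))
```

A spreadsheet has no equivalent of the first request. Its inputs and outputs are only meaningful to a person who already knows the layout, which is why it cannot take part in an automated chain without bespoke glue code.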

Read the paper

Want to dig into the details? Read the full paper: GeoMCP: A Trustworthy Framework for AI-Assisted Analytical Geotechnical Engineering

How do you see LLMs fitting into rigorous technical workflows? We at SINTEF would love to hear your thoughts.
